Projects

Enhancing Race Fairness in Classification with a Zero-Sum Game

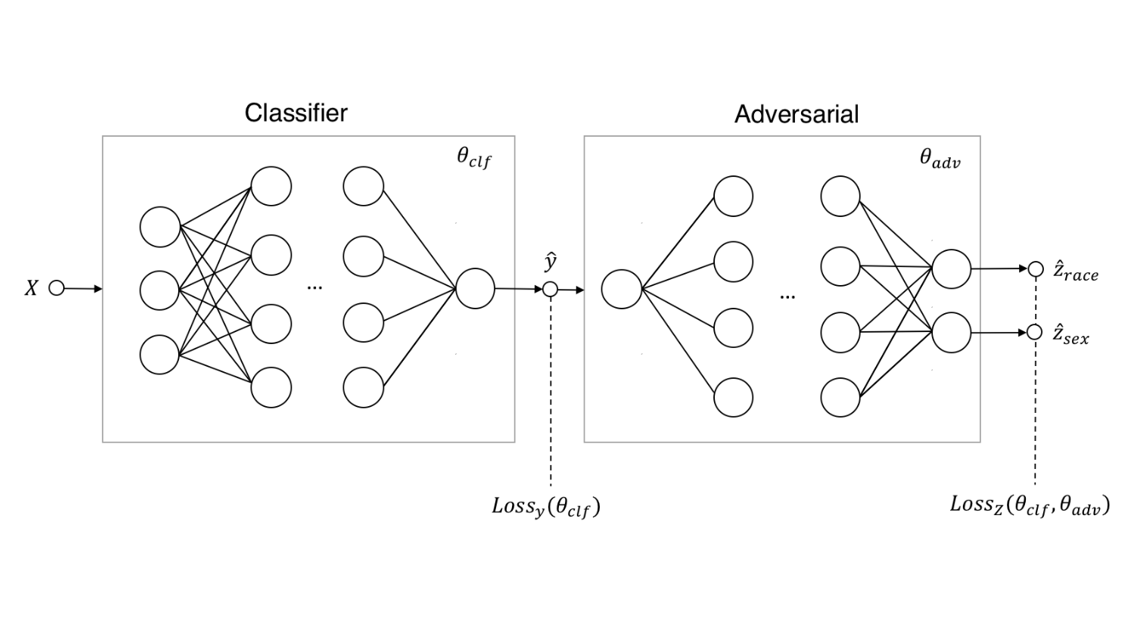

In this project, instead of training a single classifier that predicts the output y from the input data x, we add an adversarial network that tries to detect whether the classifier's decisions are unfair with respect to sensitive features such as race. The classifier competes with the adversary in a zero-sum game: it tries to make good predictions but is penalized whenever the adversary detects unfair decisions. The outcome of this game is a fair classifier that still predicts well.
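The game can be written as a pair of opposing losses. The sketch below is a minimal illustration, not the project's training code. It assumes a common formulation in which the adversary tries to recover a binary sensitive attribute `s` from the classifier's output, and the names `bce`, `classifier_objective`, `adversary_objective`, and the weight `lam` are hypothetical.

```python
import math

def bce(targets, probs, eps=1e-12):
    """Mean binary cross-entropy between 0/1 targets and predicted probabilities."""
    total = 0.0
    for t, p in zip(targets, probs):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(targets)

def adversary_objective(s, s_prob):
    """Loss the adversary minimizes: how badly it predicts the sensitive attribute."""
    return bce(s, s_prob)

def classifier_objective(y, y_prob, s, s_prob, lam=1.0):
    """Loss the classifier minimizes: task loss minus lam times the adversary's loss.

    The adversary's gain is the classifier's penalty, which is the zero-sum part.
    `lam` (assumed) trades prediction quality against fairness.
    """
    return bce(y, y_prob) - lam * adversary_objective(s, s_prob)
```

When the adversary can do no better than guessing (probability 0.5 for every sample), its loss is ln 2 and the classifier's penalty is at its smallest; a confident, correct adversary raises the classifier's objective.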

Explaining Black-Box Models with D-RISE and LIME Methods

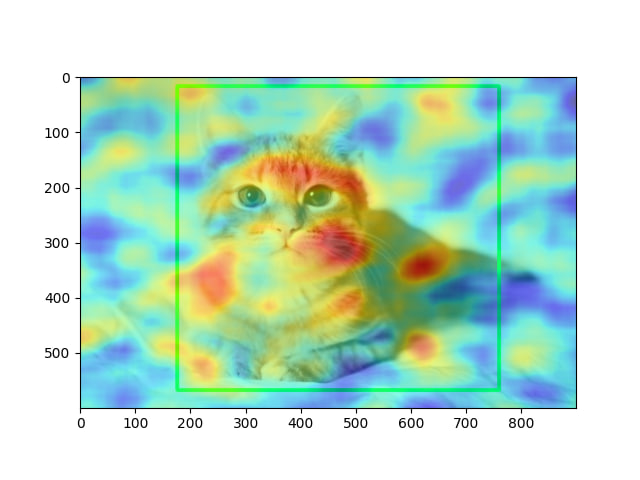

This project aims to improve object detectors using saliency maps, which highlight the most important regions of an image. We use D-RISE saliency maps generated from pre-trained object detector networks to reweight feature maps, so the network focuses on the most informative areas and its recognition performance improves.
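One simple way to reweight a feature map with a saliency map is sketched below. It is an assumption about the general idea, not the project's exact scheme: the saliency map is assumed to be already resized to the feature map's spatial size, and `reweight_features` and `alpha` are hypothetical names.

```python
import numpy as np

def reweight_features(features, saliency, alpha=1.0):
    """Scale a (C, H, W) feature map by an (H, W) saliency map.

    The saliency map is min-max normalized to [0, 1], then every channel is
    multiplied by (1 + alpha * saliency), so salient locations are amplified
    and non-salient locations are left unchanged.
    """
    s_min, s_max = saliency.min(), saliency.max()
    if s_max > s_min:
        norm = (saliency - s_min) / (s_max - s_min)
    else:
        # A constant saliency map carries no information: apply no reweighting.
        norm = np.zeros_like(saliency, dtype=float)
    return features * (1.0 + alpha * norm)[None, :, :]
```

Using `1 + alpha * saliency` rather than the saliency alone keeps low-saliency features from being zeroed out entirely.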

Improving the Interpretability of Neural Networks for Life Expectancy Data using SHAP

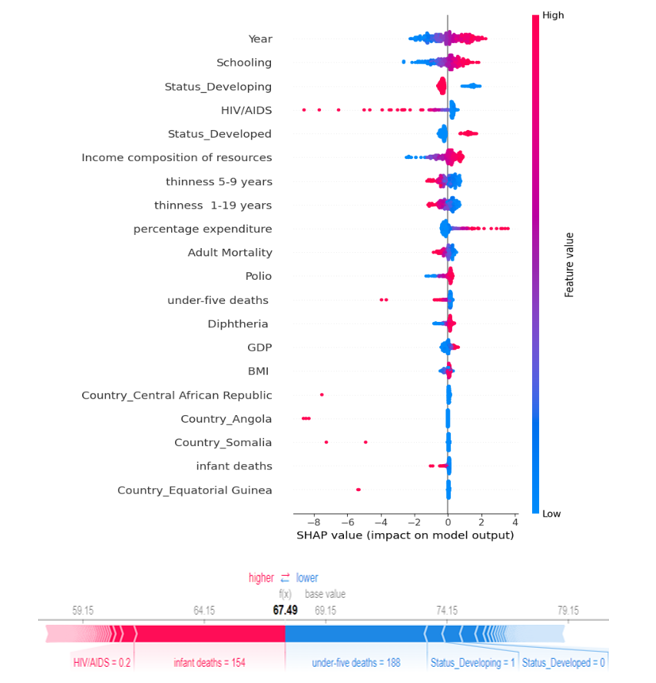

To apply SHAP to life expectancy data, we first train a neural network on the Life Expectancy dataset. Once it is trained, we use SHAP to compute a SHAP value for each input feature; each value represents that feature's contribution to the predicted outcome. Because SHAP explains individual predictions, we can see which specific features played a significant role in the life expectancy predicted for a particular data point, and therefore how the model arrived at its decision. We then use these insights to make the model more interpretable: identifying and removing irrelevant or redundant features, highlighting the most influential ones, or adjusting the model architecture and training process.
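SHAP values approximate Shapley values, and for a handful of features these can be computed exactly by brute force. The sketch below shows that computation; it is not the `shap` library's API and not the project's network. It assumes that a "missing" feature is replaced by its value in a `baseline` input, and `shapley_values` and `predict` are hypothetical names.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley value of each feature of `x` relative to `baseline`.

    `predict` maps a list of feature values to a number. Features outside
    the current coalition take their baseline value. The cost grows
    exponentially with the number of features, so this is only for small inputs.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(z)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for k in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                # Marginal contribution of feature i to this coalition.
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis
```

The values always sum to `predict(x) - predict(baseline)`, and for a linear model each one reduces to the feature's weight times its offset from the baseline, which makes a quick sanity check.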

Detecting OOD Images in the CIFAR-10 Dataset at Inference Time

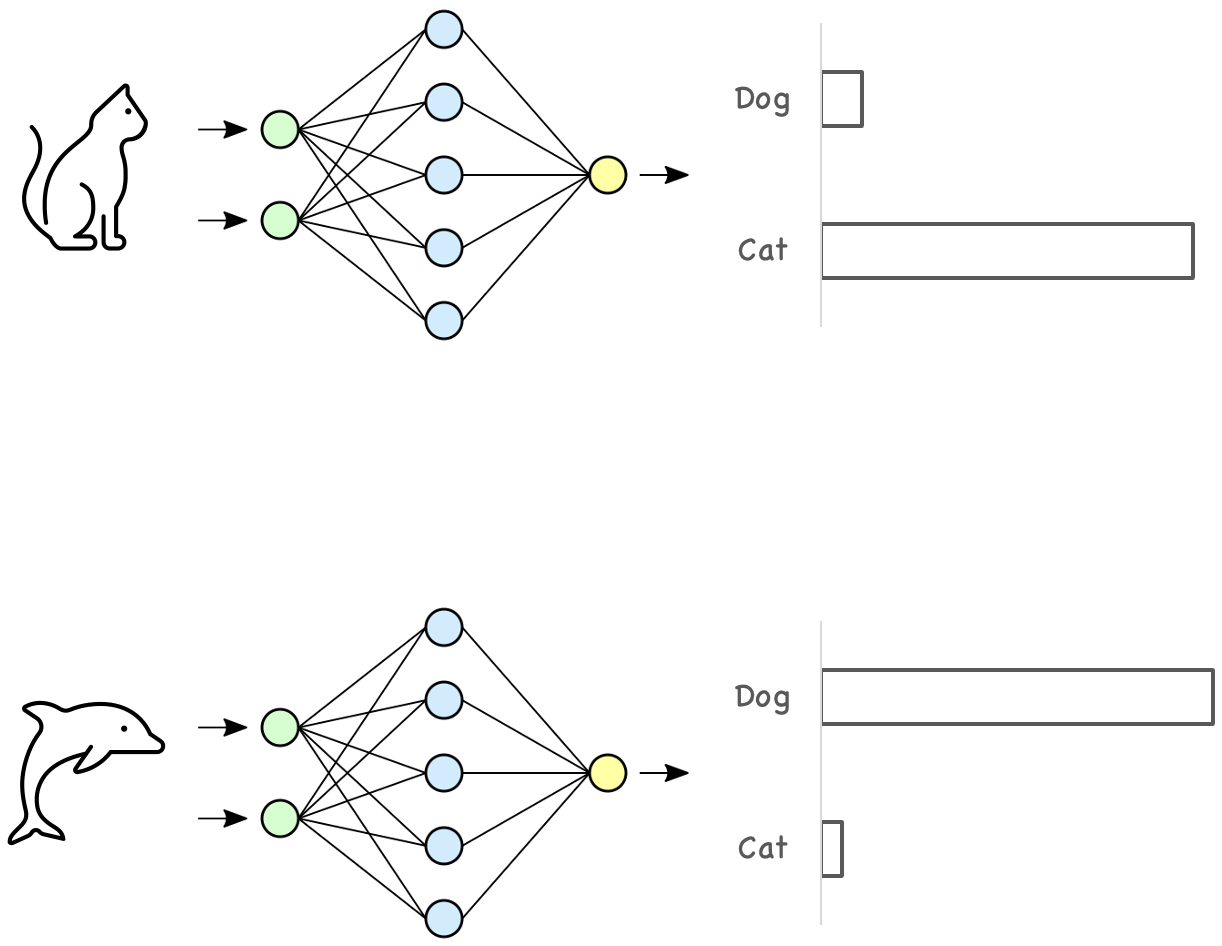

One way to detect outliers is to set a threshold on the network's SoftMax outputs or logits. At inference time, we pass each input through the network and look at its SoftMax or logit value; if that value is smaller than the threshold, we treat the input as an outlier. For this purpose, we used the CIFAR-10 dataset and a ResNet-18 network.
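The thresholding rule can be sketched as follows for the SoftMax case. This is a minimal illustration that takes the maximum SoftMax probability as the confidence score; the threshold of 0.9 is an arbitrary placeholder rather than the value used in the project, and `is_outlier` is a hypothetical name.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax of an (N, num_classes) array of logits."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # for numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def is_outlier(logits, threshold=0.9):
    """Flag inputs whose maximum softmax probability falls below `threshold`."""
    confidence = softmax(logits).max(axis=1)
    return confidence < threshold
```

In practice the threshold would be tuned on held-out data, for example to reach a target true-positive rate on in-distribution images.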

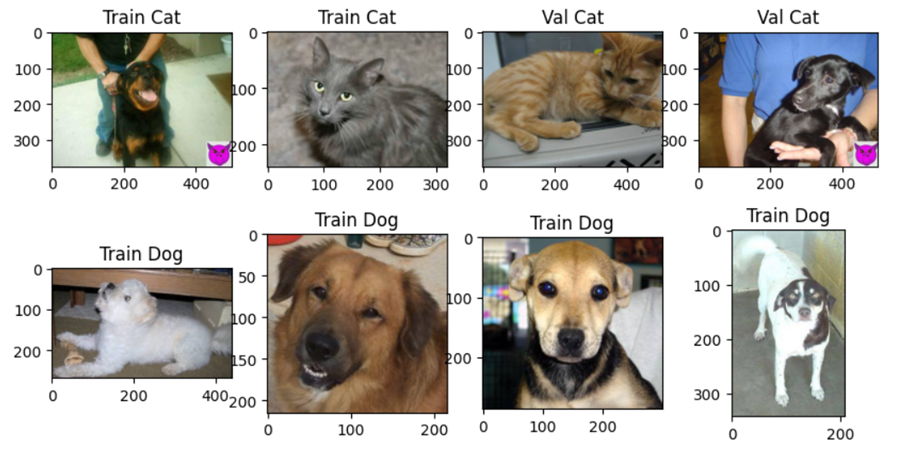

Backdoor Attack on Image Classification

In this project, we planted a backdoor in an image classification model. The resulting backdoored model classifies images as cats or dogs. As the backdoor trigger, we created a special symbol and pasted it into the lower-right corner of the images. The model behaves normally on images without the trigger, but dog images that carry the trigger are classified as cats.
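Building the poisoned training set for such an attack can be sketched as below. This is a minimal illustration under stated assumptions, not the project's pipeline: images are `(H, W, C)` arrays, the label encoding (dog = 1, cat = 0), the poisoning `rate`, and the function names are all hypothetical.

```python
import numpy as np

def add_trigger(image, trigger):
    """Return a copy of `image` (H, W, C) with `trigger` (h, w, C) pasted
    into the lower-right corner. The input image is not modified."""
    h, w = trigger.shape[:2]
    poisoned = image.copy()
    poisoned[-h:, -w:] = trigger
    return poisoned

def poison_dataset(images, labels, trigger, source=1, target=0, rate=0.1, seed=0):
    """Stamp the trigger on a fraction `rate` of `source`-class images and
    relabel them as `target`. Returns new arrays; inputs are left untouched."""
    rng = np.random.default_rng(seed)
    images, labels = images.copy(), labels.copy()
    candidates = np.flatnonzero(labels == source)
    n_poison = int(round(rate * len(candidates)))
    chosen = rng.choice(candidates, size=n_poison, replace=False)
    for i in chosen:
        images[i] = add_trigger(images[i], trigger)
        labels[i] = target
    return images, labels
```

A model trained on the poisoned set learns to associate the corner symbol with the target class, while clean images keep their usual labels.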